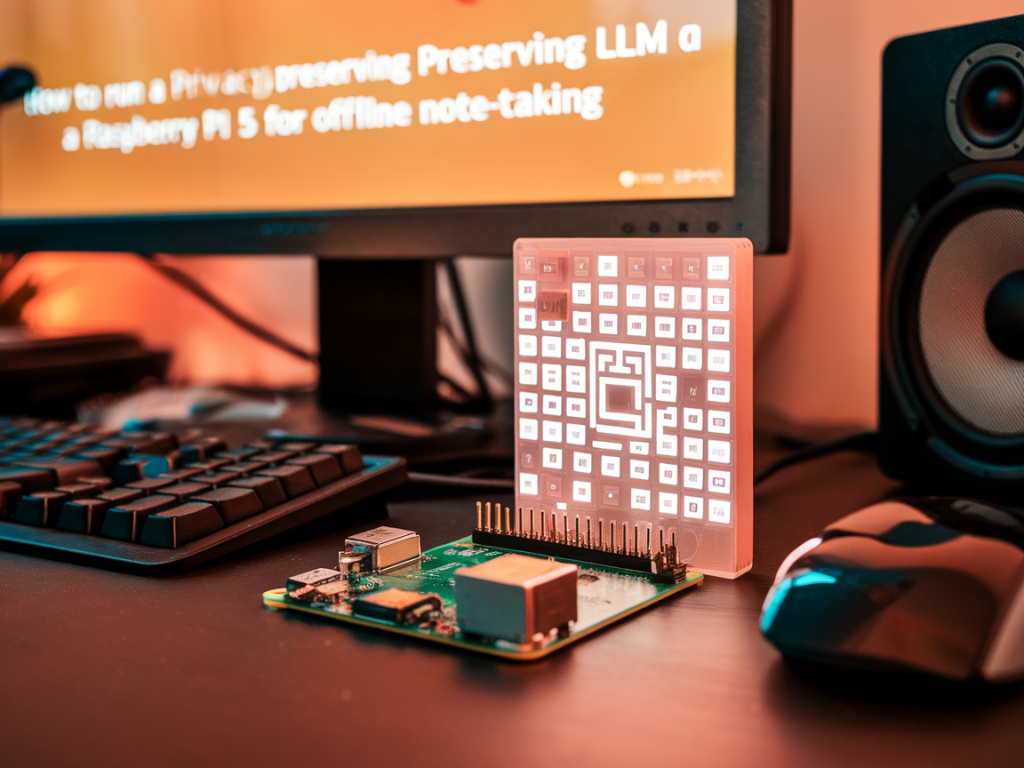

I wanted a private, offline note-taking assistant that I could carry around on a cheap, low-power device. The Raspberry Pi 5—when paired with the right model and software—lets you do exactly that: run a local language model that summarizes, tags, and searches your notes without ever sending data to the cloud. I built one, iterated a few times, and in this article I’ll walk you through the practical choices, performance trade-offs, and step-by-step setup so you can do the same.

Why run an LLM on a Raspberry Pi 5?

There are three reasons I care about this setup:

What you’ll need (hardware and software)

Here are the components I recommend. I tested with the 8GB Pi 5; 4GB is possible but you’ll be very constrained, and 16GB is ideal if you want larger models.

| Component | Recommendation | Why |
|---|---|---|
| Raspberry Pi 5 | 8GB or 16GB | Enough RAM for 7B-class quantized models and decent caching |
| Storage | NVMe SSD via a PCIe (M.2 HAT) or USB 3.0 adapter | Models are large; SSD makes startup and swapping acceptable |
| Power | Official USB-C PSU | Stable power for consistent performance |
| OS | Ubuntu 24.04 64-bit or Raspberry Pi OS 64-bit | Better toolchain and 64-bit address space for models |

Choosing a model: size, license, and quantization

On-device LLMs rely on two levers: model size and quantization. The sweet spot for the Pi 5 is typically 6–7B models in a 4-bit quantized format (ggml/q4_*). At roughly 4 bits per weight, a 7B model's weights take about 4 GB, which leaves headroom on an 8GB board; this is a good balance between capability and memory footprint.
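To make the size-versus-quantization trade-off concrete, here is a back-of-the-envelope estimate in Python. It is only a rough sketch: the function name is hypothetical, the 1 GB allowance for KV cache and runtime is an assumption rather than a measurement, and 4.5 bits per weight reflects a q4_0-style layout (4-bit values plus one 16-bit scale per block of 32 weights).

```python
def estimate_model_memory_gb(n_params_billion: float,
                             bits_per_weight: float,
                             overhead_gb: float = 1.0) -> float:
    """Rough RAM estimate: weight storage plus a flat allowance.

    overhead_gb is an assumed allowance for KV cache and runtime
    buffers; real usage depends on context length and build options.
    """
    weight_bytes = n_params_billion * 1e9 * bits_per_weight / 8
    return weight_bytes / 1e9 + overhead_gb


# Compare a 7B model at 16-bit, 8-bit, and q4_0-style precision.
for bits in (16, 8, 4.5):
    gb = estimate_model_memory_gb(7, bits)
    print(f"7B at {bits} bits/weight: ~{gb:.1f} GB")
```

By this estimate, only the 4-bit variant leaves meaningful headroom on an 8GB board once the OS and the note service are also resident.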

Software stack I used

My stack is intentionally minimal and well-supported:

Step-by-step setup (high level)

This is the condensed path I followed. Exact commands depend on the OS choice, but these are representative for Ubuntu 24.04 ARM64.

sudo apt update && sudo apt upgrade -y

sudo apt install -y git build-essential cmake python3-pip python3-venv libssl-dev

git clone https://github.com/ggerganov/llama.cpp.git

cd llama.cpp

make clean && make -j4

Note: the Makefile auto-detects ARM and will build with NEON where possible. Newer llama.cpp checkouts build with CMake rather than make; if the make step fails, use this instead:

cmake -B build && cmake --build build -j4

Either download a community pre-quantized ggml/gguf model (search "ggml-7b-q4") or convert a PTH/ggml file using the conversion tools included in the repo. Keep the model on the SSD to avoid SD-card I/O limits.

python3 -m venv venv

source venv/bin/activate

pip install wheel

pip install llama-cpp-python

Then point the binding to the model path in your code.

python -c "from llama_cpp import Llama; m=Llama(model_path='path/to/ggml-model-q4.bin'); print(m('Summarize: I like apples', max_tokens=64))"
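For anything beyond a one-liner, it helps to wrap the call in a small helper. The sketch below is one possible shape, not the only way to do it: the prompt wording, the function names, and the `n_ctx` and `n_threads` values are illustrative assumptions, and the model path is the same placeholder as above. The helper accepts any callable that returns a completion dict of the form `{"choices": [{"text": ...}]}`, which is what a `Llama` object returns when called with a prompt, so it can be exercised with a stub before the model is downloaded.

```python
from typing import Any, Callable, Dict

# Any callable that takes a prompt plus keyword options and returns a
# completion dict shaped like {"choices": [{"text": "..."}]}.
CompletionFn = Callable[..., Dict[str, Any]]


def build_summary_prompt(note: str) -> str:
    """Hypothetical prompt template; tune the wording for your model."""
    return (
        "Summarize the following note in one or two sentences.\n\n"
        f"Note:\n{note.strip()}\n\nSummary:"
    )


def summarize(llm: CompletionFn, note: str, max_tokens: int = 64) -> str:
    """Run one completion and return only the generated text."""
    result = llm(build_summary_prompt(note), max_tokens=max_tokens, stop=["\n\n"])
    return result["choices"][0]["text"].strip()


def load_llm(model_path: str) -> CompletionFn:
    """Load a local model; imported lazily so the helpers work without it."""
    from llama_cpp import Llama

    # n_ctx and n_threads are assumed starting points for a 4-core Pi 5.
    return Llama(model_path=model_path, n_ctx=2048, n_threads=4)
```

With a model in place, `print(summarize(load_llm("path/to/ggml-model-q4.bin"), "I like apples"))` should print a short summary. Loading the model once and reusing the object matters on the Pi, since the load is the slow part.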

Integrating with your note app

I chose Obsidian for daily notes because it’s local-first and supports community plugins. For automation I run a small FastAPI service on the Pi that accepts a note, calls the LLM to summarize or tag it, and returns metadata to be saved alongside the note. This keeps the UI decoupled from the model code and makes it easy to swap models later.
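A service like this can be quite small. The following is a minimal sketch under stated assumptions, not my exact code: the `/annotate` route, the `Note` schema, the prompt wording, and the function names are all hypothetical. The model is passed in as a plain callable that returns a llama-cpp-python style completion dict, which is what keeps the HTTP layer decoupled from the model code.

```python
from typing import Any, Callable, Dict

# Any callable returning {"choices": [{"text": "..."}]}, e.g. a Llama object.
CompletionFn = Callable[..., Dict[str, Any]]


def _complete(llm: CompletionFn, prompt: str, max_tokens: int) -> str:
    """Run one completion and return the stripped text."""
    return llm(prompt, max_tokens=max_tokens)["choices"][0]["text"].strip()


def annotate_note(llm: CompletionFn, text: str) -> Dict[str, Any]:
    """Ask the model for a one-line summary and a short tag list."""
    summary = _complete(
        llm, f"Summarize this note in one sentence.\n\n{text}\n\nSummary:", 64
    )
    raw_tags = _complete(
        llm,
        f"List up to five topic tags for this note, comma-separated.\n\n{text}\n\nTags:",
        32,
    )
    # Normalize tags: trim whitespace, drop a leading '#', lowercase, skip empties.
    tags = [t.strip().lstrip("#").strip().lower() for t in raw_tags.split(",")]
    return {"summary": summary, "tags": [t for t in tags if t]}


def create_app(llm: CompletionFn):
    """Build the FastAPI app around an already-loaded model."""
    # Imported here so annotate_note can be tested without FastAPI installed.
    from fastapi import FastAPI
    from pydantic import BaseModel

    class Note(BaseModel):
        text: str

    app = FastAPI()

    # A plain (non-async) handler, so the blocking model call runs in
    # FastAPI's threadpool instead of stalling the event loop.
    @app.post("/annotate")
    def annotate(note: Note):
        return annotate_note(llm, note.text)

    return app
```

To serve it, one option is a module (say, a hypothetical `notes_service.py`) that sets `app = create_app(Llama(model_path=...))` and is started with `uvicorn notes_service:app --host 127.0.0.1 --port 8000`. Binding to 127.0.0.1 keeps the endpoint off the network unless you deliberately expose it.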

Performance tips and gotchas

Security and privacy considerations

Running everything locally solves many privacy risks, but there are still important steps to harden the box:

Limitations and trade-offs

On-device LLMs on tiny hardware are a set of trade-offs:

Running a privacy-preserving LLM on a Raspberry Pi 5 for offline note-taking is practical today. It’s not for every workflow—if you need state-of-the-art creative writing or massive context windows, cloud models still win—but for private, reliable note summarization and tagging, a Pi-based assistant works surprisingly well. I’ve used mine for weeks, iterated on quantization and caching, and it now sits in my home office making notes searchable and useful without touching the cloud.